Dynamics 365 UO Data Task Automation is a framework that helps with the following:

- Demo Data Setup

- Golden Configuration Setup

- Data Migration Validation

- Data Entities and Integration Test automation

D365 UO: Introduction to Data Task Automation

Data packages from the Shared Asset Library or a Project Asset Library can be downloaded and imported automatically into D365FO using Data Task Automation (DTA), which is available in the Data management workspace. The high-level data flow diagram is shown below:

The following image shows the features of Data Task Automation

The automation tasks are configured through a manifest. The following figure shows an example of a DTA manifest file.

The above manifest file can be loaded into Data management and results in the creation of several data automation tasks as shown below.

Combining data packages with data task automation lets users build a flexible framework that automates the generation of all relevant data in a new deployment from a template, and that creates test cases for both recurring integration testing and regular testing.

Data Task Automation: The Manifest file

The manifest file provides a mechanism for creating test cases (tasks) for Data Task Automation. The manifest has two main sections: SharedSetup and the task definition.

Data Task Automation: SharedSetup

The Shared setup section defines general task parameters and behaviors for all tasks in the manifest.

1: Data files from LCS: These elements define the data packages and data files that the tasks in the manifest will use. The data files must be either in the asset library of your LCS project or in the Shared Asset Library.

- Name

- Project ID (the LCS project ID; when it is empty, the package is taken from the Shared Asset Library)
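As a minimal sketch of these two cases, the fragment below declares one data package from a project asset library and one from the Shared Asset Library. The attribute names follow the full manifest later in this post. The second entry's ID and package name are hypothetical, and its empty `lcsProjectId` relies on the rule described above.

```xml
<SharedSetup>
    <!-- Package stored in a specific LCS project asset library -->
    <DataFile ID='CustomersV3' name='CustomersV3' assetType='Data package' lcsProjectId='1368270'/>
    <!-- Hypothetical package: the empty lcsProjectId points to the Shared Asset Library -->
    <DataFile ID='SharedDemoData' name='Shared demo data package' assetType='Data package' lcsProjectId=''/>
</SharedSetup>
```

Tasks then refer to a package by its `ID`, not by its name, so the `ID` values should be unique within the manifest.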

2: Generic Job Definition: This section defines the data project definition. There can be more than one job definition in a manifest. A test case can override the job definition at the task level.

The Recurring batch type, defined under the Mode element, helps create recurring integration scenarios. There are other Mode types that simulate different scenarios, such as initiating an export or import from the UI.
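For illustration, a second job definition could sit next to a recurring one in the same SharedSetup section. The sketch below reuses the element names from the full manifest later in this post. The ID is hypothetical, and the `Import async` mode value is an assumption that should be checked against the Mode values your environment supports.

```xml
<SharedSetup>
    <!-- Hypothetical job definition that uses a non-recurring mode -->
    <JobDefinition ID='AsyncImportDefinition'>
        <Operation>Import</Operation>
        <SkipStaging>Yes</SkipStaging>
        <Truncate></Truncate>
        <!-- Assumed mode value; the manifest in this post uses 'Recurring batch' -->
        <Mode>Import async</Mode>
        <BatchFrequencyInMinutes>1</BatchFrequencyInMinutes>
        <NumberOfTimesToRunBatch>2</NumberOfTimesToRunBatch>
        <UploadFrequencyInSeconds>1</UploadFrequencyInSeconds>
        <TotalNumberOfTimesToUploadFile>1</TotalNumberOfTimesToUploadFile>
        <SupportedDataSourceType>Package</SupportedDataSourceType>
        <ProcessMessagesInOrder>No</ProcessMessagesInOrder>
        <PreventUploadWhenZeroRecords>No</PreventUploadWhenZeroRecords>
        <UseCompanyFromMessage>Yes</UseCompanyFromMessage>
        <LegalEntity>DAT</LegalEntity>
    </JobDefinition>
</SharedSetup>
```

Keeping one definition per scenario lets each test case choose its behavior by changing only the `RefID` it points to.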

3: Generic Entity Definition: This section defines the characteristics of an entity that a task in the manifest will use. There can be more than one definition, one for each entity that is used by the tasks in the manifest. A test case can override the entity definition at the task level.

Data Task Automation: Test Group definition

Test groups can be used to organize related tasks in a manifest. There can be more than one test group in a manifest, and each TestGroup contains a number of test cases. A test case definition can override the generic job and entity definitions by specifying values specific to that test case (for example, DataFile or Mode).
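A test group with one plain test case and one overriding test case might look like the sketch below. It assumes that an override is written as a child element under the referencing element. The test case titles and IDs and the `USMF` legal entity are hypothetical. The referenced IDs are the ones defined in the full manifest later in this post.

```xml
<TestGroup name='Manage Integration Test for Entities'>
    <!-- Uses the shared definitions unchanged -->
    <TestCase Title='Add customers in DAT' ID='AddCustomersDAT' RepeatCount='1' TraceParser='on' TimeOut='20'>
        <DataFile RefID='CustomersV3' />
        <JobDefinition RefID='GenericIntegrationTestDefinition' />
        <EntitySetup RefID='Generic' />
    </TestCase>
    <!-- Hypothetical override: same job definition, different legal entity -->
    <TestCase Title='Add customers in USMF' ID='AddCustomersUSMF' RepeatCount='1' TraceParser='on' TimeOut='20'>
        <DataFile RefID='CustomersV3' />
        <JobDefinition RefID='GenericIntegrationTestDefinition'>
            <LegalEntity>USMF</LegalEntity>
        </JobDefinition>
        <EntitySetup RefID='Generic' />
    </TestCase>
</TestGroup>
```

If the override works this way in your environment, one data package can be loaded into several legal entities without duplicating the job definition.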

Data Task Automation: Best practices for manifest design

Granularity

- Define the granularity of your manifest based on the functional use case.

- During development, start with as many manifests as you need; as the project progresses, merge them at the functional level.

- Consider separation of duties. For example, you might have one manifest for the setup of demo data and another manifest for the setup of the golden configuration for your environment. In this way, you can make sure that team members use only the manifests that they are supposed to use.

Inheritance

- The manifest schema supports inheritance of common elements that will apply to all tasks in the manifest. A task can override a common element to define a unique behavior. The purpose of the Shared setup section is to minimize repetition of configuration elements, so that elements are reused as much as possible. The goal is to keep the manifest concise and clean, to improve maintenance and readability.

Source Control

- Manifests that must be used by all the members of an implementation team should be stored in source control in the Application Object Tree (AOT).

Benefits of Data Task Automation

Data task automation in Microsoft Dynamics 365 for Finance and Operations lets you easily repeat many types of data tasks and validate the outcome of each task. The benefits of DTA are listed below:

- Built into the D365 Product – Environment Agnostic / Available to everyone

- Low Code/No Code – Declarative Authoring of Tasks [xml]

- LCS Integration for Test Data

(As Data Packages)

- Shared Asset Library

- Project Asset Library

- Upload Data to Multiple Legal Entities in one-go using DTA

- It can be included to AOT

resources using VS Packages (Dev ALM)

- It will be available in D365FO UI

- It also supports Loading of the Manifest from File system

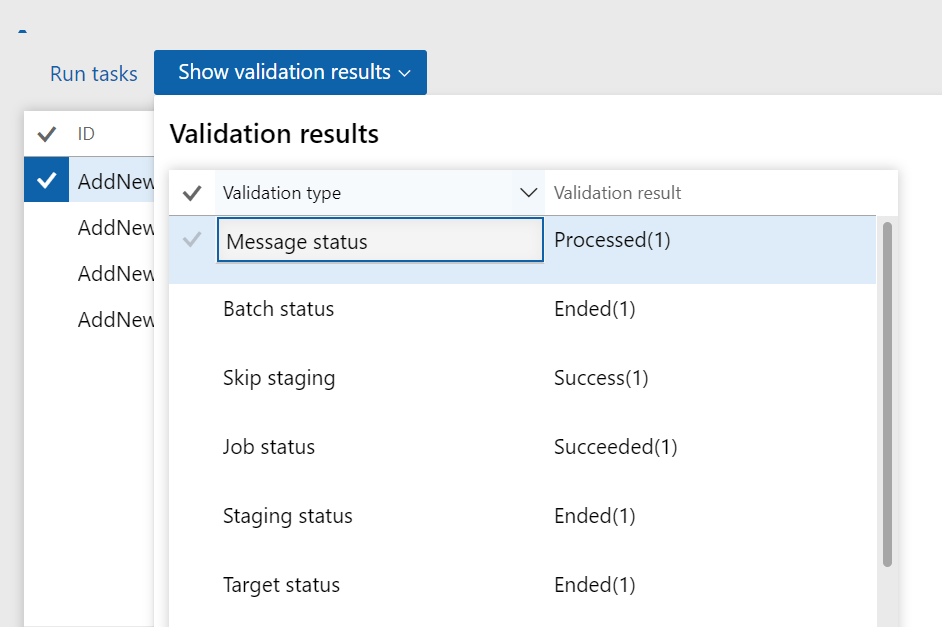

- Good Validations

- Job, batch and Integration status

- Records Count

- Staging and Target status

- Other options

- Skip staging

- Truncations

Steps to Set Up Data Task Automation

Create the required data packages: Identify the data required for the test cases (tasks) and create the data packages.

Upload the data packages to LCS: Upload the data packages to the LCS asset library. See the steps to create data packages.

Create the manifest file for Data Task Automation: Identify the operations and test cases to be performed via DTA, then create the manifest file required for these operations. Detailed information about the manifest can be found here. Store the manifest file in source control, and follow the best practices above when designing it.

Below is a simple example manifest file that uploads the CustomersV3 entity. It expects a data package named “CustomersV3” in LCS (as a result of the previous two steps). Visual Studio or VS Code is ideal for development.

```xml
<?xml version='1.0' encoding='utf-8'?>
<TestManifest name='HSO-DTA-Testing-Intro'>
    <SharedSetup>
        <!-- Data package uploaded to the LCS project asset library -->
        <DataFile ID='CustomersV3' name='CustomersV3' assetType='Data package' lcsProjectId='1368270'/>
        <!-- Generic job definition referenced by the test cases -->
        <JobDefinition ID='GenericIntegrationTestDefinition'>
            <Operation>Import</Operation>
            <SkipStaging>Yes</SkipStaging>
            <Truncate></Truncate>
            <Mode>Recurring batch</Mode>
            <BatchFrequencyInMinutes>1</BatchFrequencyInMinutes>
            <NumberOfTimesToRunBatch>2</NumberOfTimesToRunBatch>
            <UploadFrequencyInSeconds>1</UploadFrequencyInSeconds>
            <TotalNumberOfTimesToUploadFile>1</TotalNumberOfTimesToUploadFile>
            <SupportedDataSourceType>Package</SupportedDataSourceType>
            <ProcessMessagesInOrder>No</ProcessMessagesInOrder>
            <PreventUploadWhenZeroRecords>No</PreventUploadWhenZeroRecords>
            <UseCompanyFromMessage>Yes</UseCompanyFromMessage>
            <LegalEntity>DAT</LegalEntity>
        </JobDefinition>
        <!-- Generic entity definition referenced by the test cases -->
        <EntitySetup ID='Generic'>
            <Entity name='*'>
                <SourceDataFormatName>Package</SourceDataFormatName>
                <ChangeTracking></ChangeTracking>
                <PublishToBYOD></PublishToBYOD>
                <DefaultRefreshType>Full push only</DefaultRefreshType>
                <ExcelWorkSheetName></ExcelWorkSheetName>
                <SelectFields>All fields</SelectFields>
                <SetBasedProcessing></SetBasedProcessing>
                <FailBatchOnErrorForExecutionUnit>No</FailBatchOnErrorForExecutionUnit>
                <FailBatchOnErrorForLevel>No</FailBatchOnErrorForLevel>
                <FailBatchOnErrorForSequence>No</FailBatchOnErrorForSequence>
                <ParallelProcessing>
                    <Threshold></Threshold>
                    <TaskCount></TaskCount>
                </ParallelProcessing>
                <!-- <MappingDetail StagingFieldName='devLNFN' AutoGenerate='Yes' AutoDefault='No' DefaultValue='' IgnoreBlankValues='No' TextQualifier='No' UseEnumLabel='No'/> -->
            </Entity>
        </EntitySetup>
    </SharedSetup>
    <TestGroup name='Manage Integration Test for Entities'>
        <!-- One test case that ties the data file, job definition, and entity setup together -->
        <TestCase Title='Adding New Customer via Integration' ID='AddNewCustomeViaIntegration' RepeatCount='1' TraceParser='on' TimeOut='20'>
            <DataFile RefID='CustomersV3' />
            <JobDefinition RefID='GenericIntegrationTestDefinition'/>
            <EntitySetup RefID='Generic' />
        </TestCase>
    </TestGroup>
</TestManifest>
```

Upload Manifest to Dynamics 365 UO

1. Log in to the D365 FO portal.

2. Navigate to the Data management workspace.

3. Open DTA by clicking Data task automation.

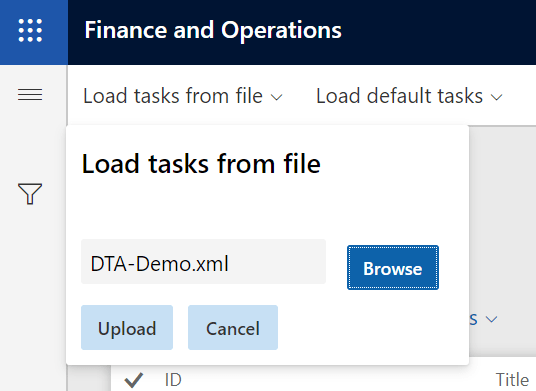

4. Click Load tasks from file in the top-left corner.

5. Select and upload the manifest XML file.

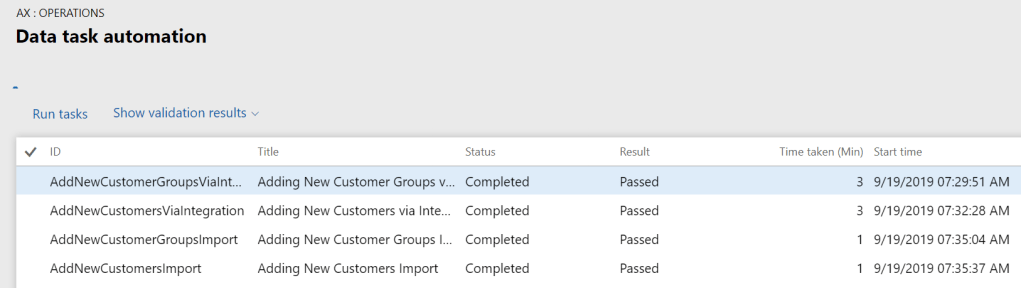

6. The upload lists all the tasks defined in the manifest. Select all of them and click Run.

7. In the dialog that appears, click “Click here to Connect to Lifecycle service”; the connection should succeed.

8. Click OK.

9. The tasks start running, and the outcome of each test case is shown once its task is complete.

10. Select a task (test case) and click “Show validation results” to see the detailed results of that test case.

Summary of Data Task Automation

- DTA is a great way to automate test cases during development.

- It provides a recurring mechanism for testing data entities using data packages.

- The business logic associated with the entities is executed during DTA.

- DTA also helps with performance baselining and regression testing.

- It still needs improvement, especially in the validation phase: the outcome only says whether a record was added successfully and says nothing about the integrity of the data.

- DTA test cases are not yet integrated with DevOps, but DTA is already part of ALM.
